#112 - The Bank That Cannot Learn

How legacy architecture turns AI from a compounding advantage into an expensive ornament

Illustration by Mary Mogoi

Hi all - This is the 112th edition of Frontier Fintech. A big thanks to my regular readers and subscribers. To those who are yet to subscribe, hit the subscribe button below and share with your colleagues and friends.

Announcing: Frontier Fintech Executive Search

Before this week’s article, a quick announcement.

I’ve partnered with Triage, a pan-African executive search firm, to offer executive search for African Digital Financial Services.

Why this exists:

The hardest leadership hires in African DFS aren’t hard because of a talent shortage. They’re hard because the roles demand people who can hold multiple contexts at once.

The Head of Revenue who combines scrappiness with commercial gravitas. The CTO with platform experience who can navigate a high-growth people environment. The banking veteran with a genuine tech and growth mindset. The senior leader who brings seniority without losing startup energy.

These profiles are rare. They don’t surface through keyword searches or credential matching.

What we’re doing:

Frontier Fintech brings ecosystem context - understanding what roles actually demand in your specific environment.

Triage brings leadership context - a structured Five Dimensions framework and rigorous search methodology that leaves no stone unturned.

Together: Ecosystem intelligence. Leadership science. Rigorous execution.

Areas of focus:

→ CTO and CPO

→ CFO and strategic finance

→ COO and operational leadership

→ Commercial leadership (Heads of Revenue, Sales Directors)

Interested?

Read more about the partnership and how it works here.

Or reach out directly: samora@frontierfintech.io

Now, on to this week’s article...

Introduction

A few weeks ago, I found myself at a breakfast meeting that I hadn’t quite expected to attend. HR leaders from some of Kenya’s most recognisable companies had gathered to hear a speaker discuss psychometric testing and how AI is reshaping talent management globally. I was the only non-HR person in the room, which perhaps explains why I experienced the morning slightly differently from everyone else.

The speaker opened with a disclaimer that has become something of a ritual in these gatherings: AI is really just a statistical model predicting the next word, so let’s not get carried away. He then spent the next forty minutes demonstrating, with genuine enthusiasm, how that same statistical model is quietly revolutionising how companies evaluate and develop their people. The contradiction didn’t seem to trouble anyone.

When the Q&A opened, the hands went up in a familiar sequence. Data governance. Accountability frameworks. Regulatory compliance around data privacy. All reasonable questions. All questions you could ask at virtually any technology briefing in corporate Nairobi, Lagos or Johannesburg without having absorbed a single word of the presentation. They’re the questions that signal engagement without actually requiring it, the corporate equivalent of looking both ways before crossing a street you have no intention of actually crossing.

I stood up and did something slightly different. I walked the room through how I actually use AI to run my business: the Claude projects I’ve built that function as a marketing team, a finance function, and a board of directors that includes a version of Munger, Buffett and Dangote that I can pressure-test ideas against. My point wasn’t to show off. It was simpler than that: AI is too consequential to engage with from the sidelines whilst sounding considered. It requires action. After the session ended, an HR leader from one of Kenya’s large banks caught me as I was leaving. He was candid in a way that people rarely are in professional settings. His board had mandated every department head to present an AI roadmap by end of Q1. He was struggling. Not because he lacked intelligence or commitment, but because he genuinely didn’t know where to start, and the clock was ticking.

Driving to my next meeting, I turned that conversation over in my mind. Here was a board doing exactly what boards should do, setting a clear strategic direction, creating accountability, driving urgency. And here was a capable leader caught between that mandate and a reality that the board’s directive couldn’t see from the boardroom: that even if every department head delivered a brilliant AI strategy tomorrow, the bank’s underlying technology infrastructure would quietly ensure that most of it remained theoretical.

I kept thinking about what I would do if I were the chair of that bank. I’d want to be hands-on, not delegating the AI question downward but acting as the coordinating intelligence across the institution, making sure the pieces connected. But then I remembered my own years in banking, and I knew that even the most engaged chair would eventually run into the same wall. The constraint isn’t leadership will. It isn’t budget, at least not primarily. It’s architecture.

That realisation is what this article is about. Banks cannot keep patching infrastructure that was never designed for what AI actually requires. A reckoning is at hand. The gap between a board mandate and a genuinely AI-native bank isn’t a strategy gap. It’s a structural one, and closing it demands a different kind of transformation from the one most banks are currently contemplating, one that many may lack the courage or alignment to execute.

To make that case properly, I want to work through four things. First, the commercial imperative, why being AI-native is no longer a competitive advantage but a survival requirement. Second, how bank technology actually evolved and why its architecture is structurally hostile to everything AI needs. Third, what genuine transformation looks like in practice, drawing on global examples of banks that have made the transition to domain-driven architecture. And finally, what this means concretely for large and mid-sized African banks, not as a theoretical roadmap but as a set of honest choices with real trade-offs attached. I’m arguing that AI may be the thing that finally forces real technological change and not incremental upgrades on a stack that no longer makes sense.

AI - The Commercial Imperative

I’ve written about AI and banking before and argued that AI will have the most impact at the experiential layer, that is, on customer experience. The first era of fintech was fundamentally about efficiency, compressing the cost and friction of moving money. Banks like Equity and GT Bank built formidable competitive positions by mastering this transition faster than their peers. Fintechs like Flutterwave and M-Pesa created infrastructure layers that reshaped how economies move value. Moving money more easily and at a much lower cost created valuable businesses and enabled financial inclusion at scale.

But efficiency is a race that reaches a floor. Once money moves instantly and cheaply, further speed gains produce diminishing returns. You cannot be more than instant. The infrastructure layer of banking is converging towards commodity. The competitive game of the next decade is about experience, making that experience so individually relevant and operationally superior that the gap between AI-native institutions and legacy ones becomes impossible to explain away to customers or shareholders.

This shift is already happening, and its most important characteristic is that the advantages it creates do not accumulate linearly. They compound.

The Compounding Machine

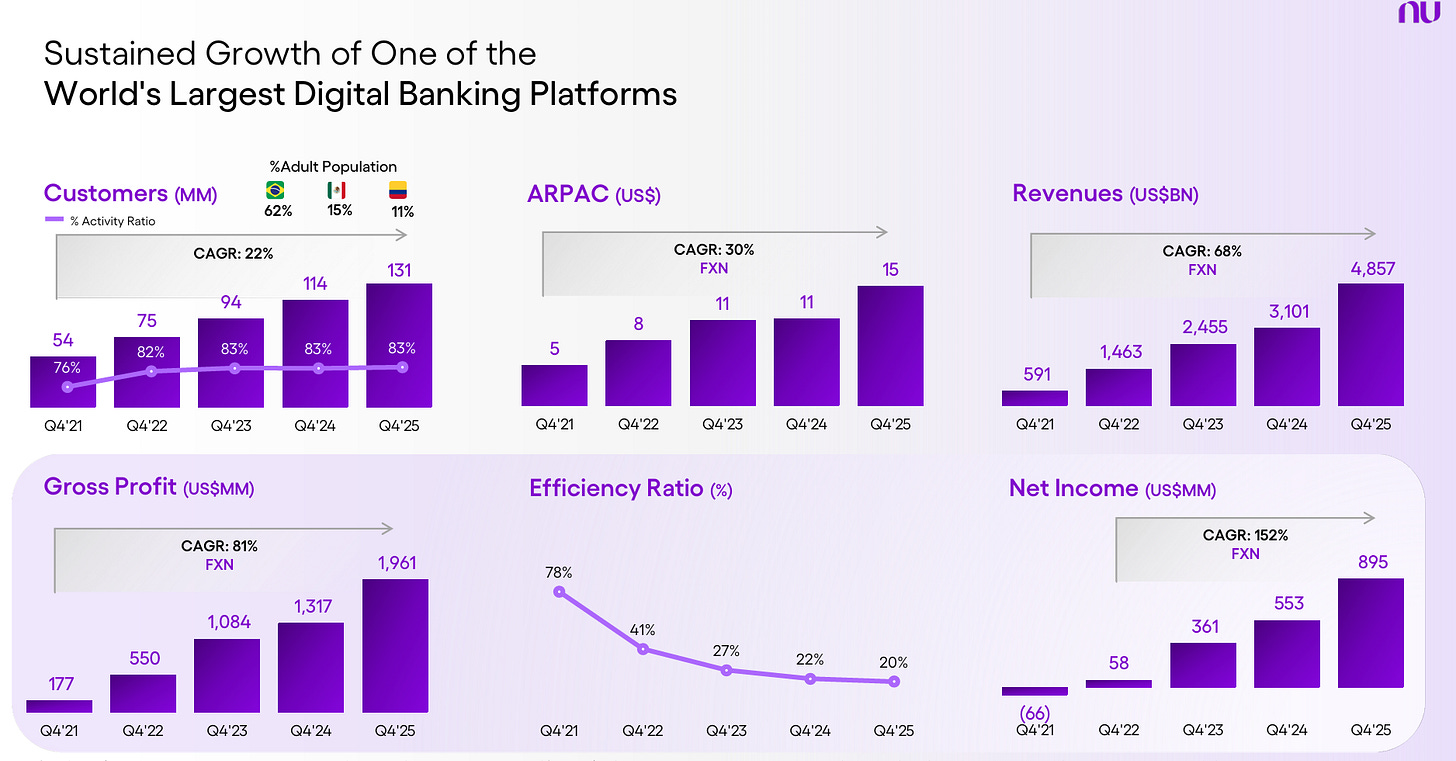

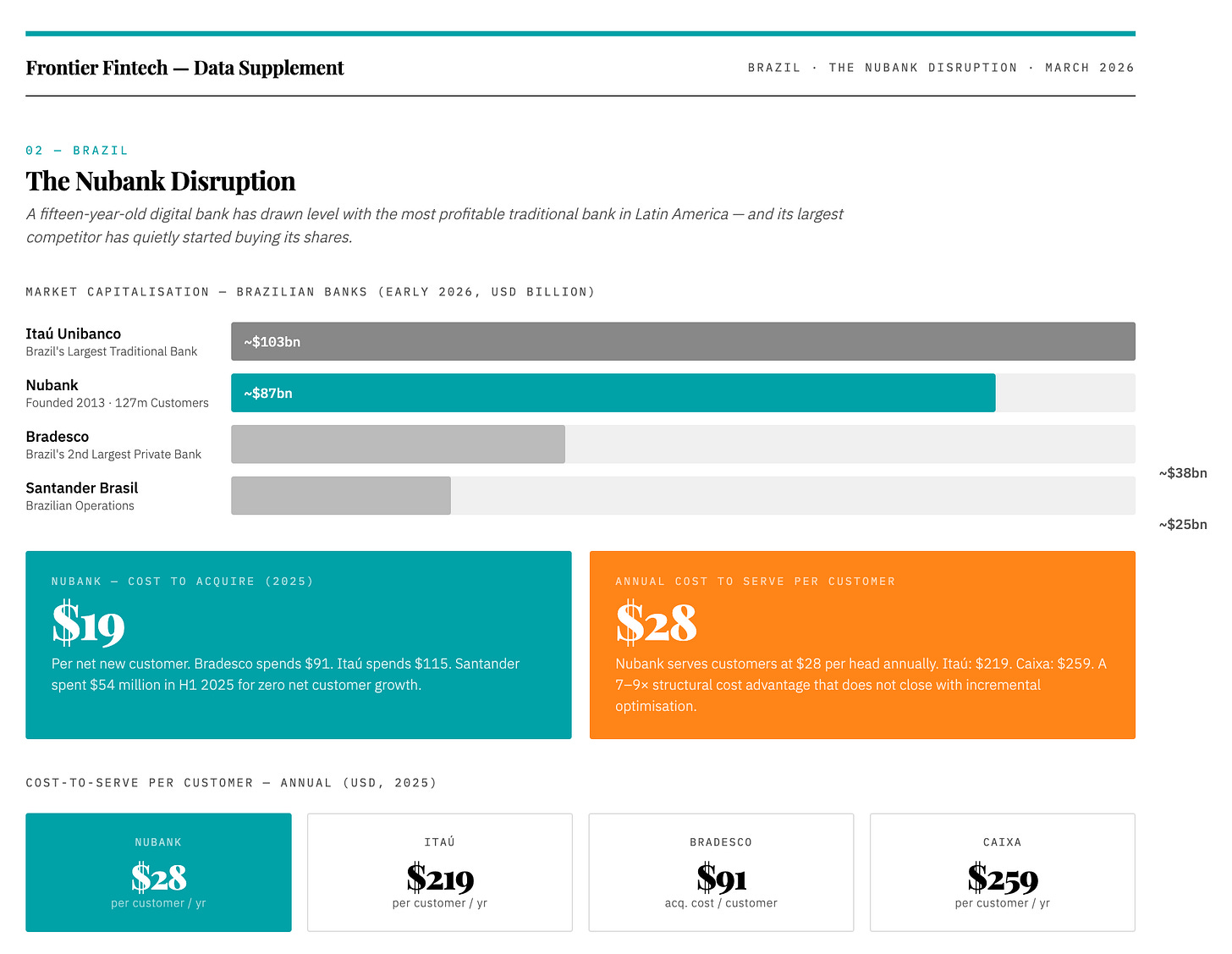

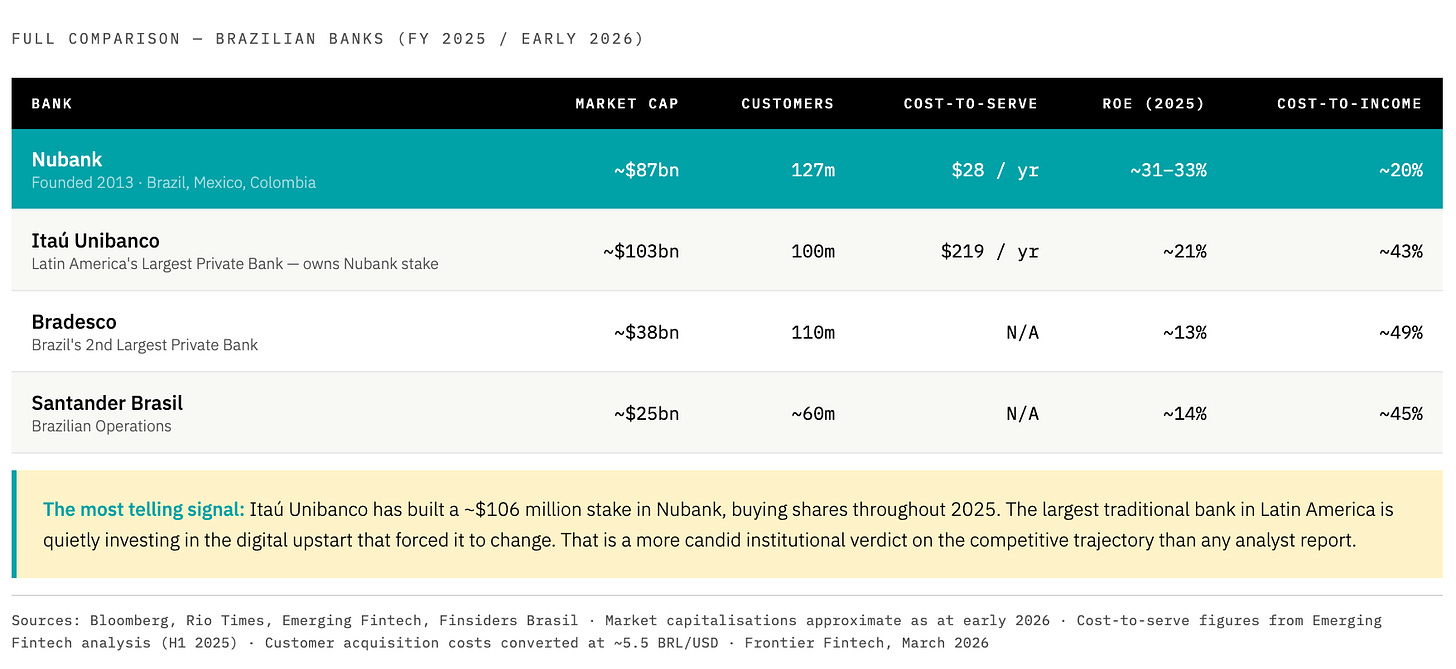

Source: Nubank Investor Relations

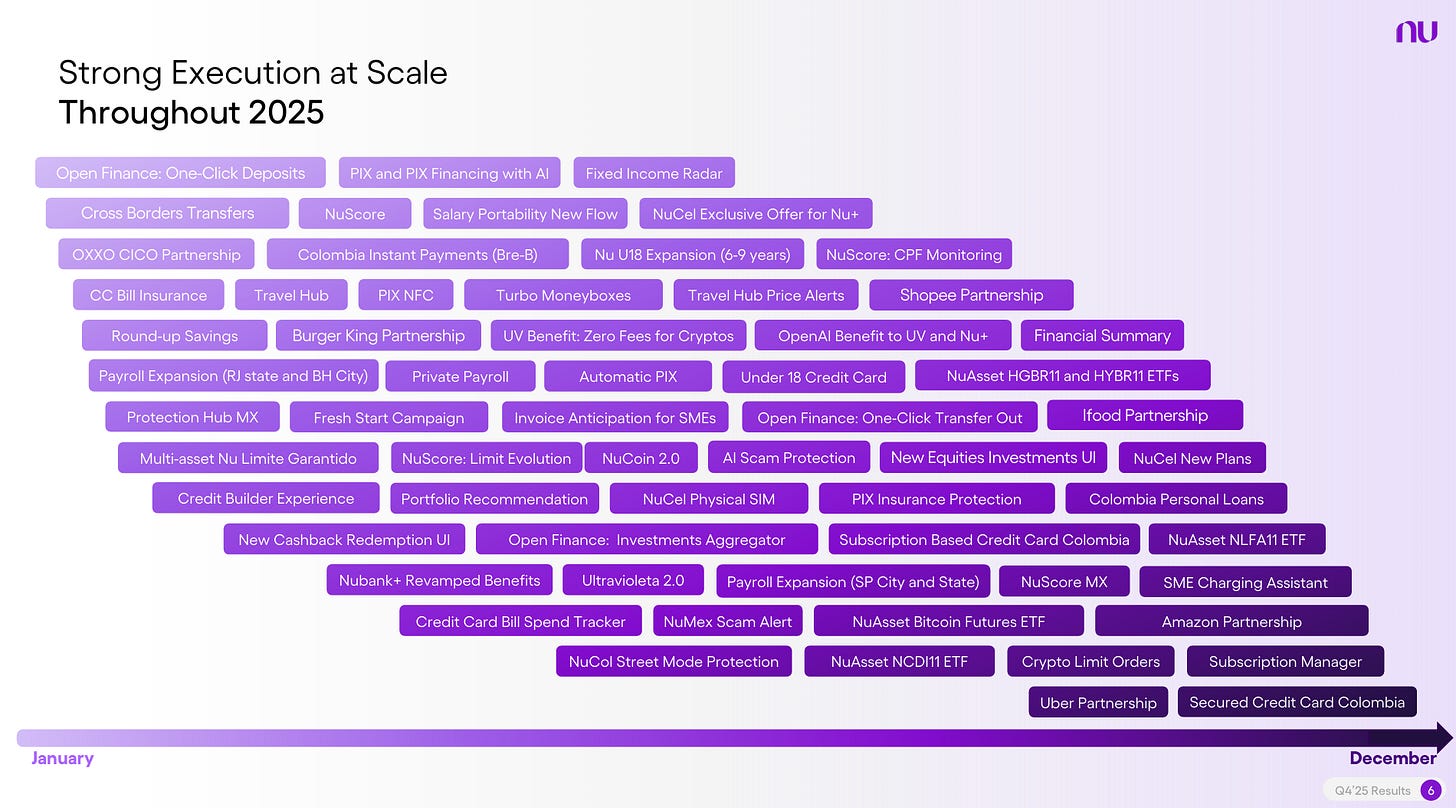

Nubank is the clearest available evidence of what compounding AI advantage looks like in financial services. The headline numbers are remarkable: 127 million customers, $5 billion in quarterly revenue, a cost-to-income ratio of 20% against an industry where 50% is considered efficient. But the more important signal is in how David Vélez describes what is driving performance. Nubank spent the past twelve to fifteen months building nuFormer, their proprietary foundation model trained on 600 billion tokens of financial transaction data. The first application was to credit card limit policies in Brazil, delivering improvements approximately three times higher than a typical machine learning upgrade produces. Kamal of Craft Silicon, who has been at the epicentre of digital transformation in Africa for over two decades, shared a similar insight: in credit scoring, natural language models produced outcomes that were orders of magnitude better than ML models. For Nubank, this means significant product velocity, both in launching new products and in improving existing ones.

Source: Nubank Investor Relations

What makes this a compounding story is the runway still ahead. NuFormer has not yet been applied to new customer acquisition, deeper into lending, or to Mexico and Colombia. Each represents a discrete expansion of the same advantage across successively larger surfaces of the business. A bank on a legacy tech stack cannot replicate this, not because it can’t access the same models, but because the underlying data architecture makes execution impossible. The gap today is a gap in current performance. The more dangerous gap is in the rate at which performance improves. In essence, existing advantages will compound, and comparisons in a few years’ time will be moot.

What the Experience Gap Will Actually Look Like

Consider two thought experiments.

The first is in corporate banking, a segment most incumbents consider their safest ground, insulated by relationships, complexity, and switching costs. That confidence may be misplaced. Imagine an AI-native bank deploying a treasury intelligence layer for a CFO managing cash across multiple African markets, with agents working continuously across yield maximisation, payment reconciliation, tax efficiency, and FX execution, calibrated to whatever metrics that specific corporate cares about most. Fintech 1.0 could not deliver this because it was built on deterministic, structured workflows. With natural language understanding, AI systems can now handle nuance, so problems that software previously could not address become solvable. They can understand the nuance of managing FX in Ethiopia and Tanzania and align this with the cash management policies required by the HQ in London. They can recognise that a specific payment is a partial payment for goods and reconcile it automatically, keeping the accounts receivable department running smoothly.

Now imagine that same CFO receiving a standard offer letter from their legacy bank: the same segmented pricing, the same generic product suite and, worse, excuses about the bank’s inability to do point-to-point integrations with the ERP, all of it delivered over a relationship lunch. The CFO will politely explain what their current AI-native bank already does for them. No amount of golf bridges that gap. What’s worse, corporates that experience this level of service will waive their own internal diversification policies to consolidate with the AI-native provider. The relationship moat doesn’t just erode. It becomes irrelevant.

Interestingly, this vector of attack is open to the Nubanks and Moniepoints of this world. Who’s to say that they’ll stop at consumer and small business banking?

The second thought experiment is in SME banking. An AI-native institution prices at the individual level, dynamically adjusting rates and limits based on each customer’s lifetime value, repayment behaviour, and growth trajectory rather than broad segmentation. Imagine an SME that has just received a US$ 5,000 deposit and wants to make a supplier payment of US$ 30. An AI-native bank can waive the fees on that small transfer after analysing past deposit growth patterns and the LTV gains from increased float income. A simple “your next payments are on us” not only drives loyalty but increases switching costs. Word travels fast in SME communities. The consequence isn’t that customers compare you unfavourably to the AI-native bank. It’s that they stop comparing at all. It becomes a different category of service, like comparing a travel agent to having a personal concierge.
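The fee-waiver decision in the SME example can be sketched in a few lines. This is a minimal illustration, not a pricing model: the `SmeCustomer` fields, the 12-month horizon, and the 5% `max_share` threshold are all hypothetical assumptions chosen only to show the shape of the logic.

```python
from dataclasses import dataclass


@dataclass
class SmeCustomer:
    """Hypothetical per-customer view; field names are illustrative."""
    avg_monthly_balance_usd: float  # recent average float held with the bank
    deposit_growth_rate: float      # month-on-month growth, e.g. 0.03 = 3%
    annual_float_yield: float       # what the bank earns on that float


def expected_float_income(c: SmeCustomer, months: int = 12) -> float:
    """Project float income over the horizon, letting the balance grow monthly."""
    income = 0.0
    balance = c.avg_monthly_balance_usd
    for _ in range(months):
        income += balance * c.annual_float_yield / 12
        balance *= 1 + c.deposit_growth_rate
    return income


def should_waive_fee(c: SmeCustomer, fee_usd: float, max_share: float = 0.05) -> bool:
    """Waive the fee when it is a small share of projected float income.

    The 5% default is an assumed policy knob, not a recommendation.
    """
    return fee_usd <= max_share * expected_float_income(c)


customer = SmeCustomer(
    avg_monthly_balance_usd=5_000,
    deposit_growth_rate=0.03,
    annual_float_yield=0.08,
)
print(should_waive_fee(customer, fee_usd=0.50))
```

The point of the sketch is that the decision is made per customer, from that customer's own trajectory, rather than from a segment-wide tariff table.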

The Point of No Return

In 2010, the banks that dismissed mobile money as a product for the unbanked were making a locally rational decision. By 2015, they were watching M-Pesa intermediate their customer relationships from the payment layer upward, and the cost of buying back that positioning had compounded beyond what incremental investment could fix. The AI architecture decision of 2025 has the same structure. The banks that treat it as a technology project rather than a commercial imperative will find themselves in 2029 facing a gap that can no longer be closed: the CX differentials will be too large, and the client relationships could be lost for good. Bank boards tend to respond only when there are commercial incentives, and the commercial outcomes are already visible in some markets.

The Last Stages of a 50 Year Tech Stack

The natural question at this point is: what stops a bank from simply acquiring the same AI capabilities? If Nubank can build nuFormer, why can’t Equity or Standard Bank build the equivalent? The answer isn’t budget, talent, or even strategic will. It’s the ground underneath the bank. You cannot run an AI-native operation on infrastructure that was designed before AI existed, not because the models won’t work, but because the architecture determines what the models are actually allowed to do. To understand why, it helps to go back to how bank technology was built in the first place, and what assumptions got baked into it that have never been revisited since.

I’ve written before about how the shift away from steam-powered factories towards those powered by electricity caused a massive productivity shift. However, this shift wasn’t immediate. There was a lag as factories had to readjust their entire set-ups to maximise the productivity gains. The design changes were based on the insight that electrical circuits enabled power to be delivered exactly where it was needed, rather than from a centralised steam engine.

With AI, banking technology is at a similar inflection point, and most banks are still in the “replace the steam engine” phase.

Core banking systems were born in the 1960s and 1970s, built on IBM mainframes to solve a genuine problem: processing enormous transaction volumes that had previously been handled manually. The solution made sense for its time, a single centralised system handling everything, with batch processing that ran overnight. Transactions settled at the end of day because that was what the hardware could support.

The problem came later. That pragmatic compromise got packaged by vendors, extended across decades, and eventually treated as the correct way to build a bank. Temenos, Oracle FLEXCUBE and Finacle each attempted to consolidate the sprawl by packaging more functionality into larger single systems. The pitch was understandable: one vendor, one contract, one system of record or, as managers preferred, one neck to choke. For banks without large technology organisations, it made commercial sense. But the deeper these systems embedded themselves, the more expensive they became to change. Andrew Baker, CIO of Capitec, recently wrote that for any bank engaging in a multi-year core banking upgrade consuming hundreds of millions of dollars, the best case outcome is that nobody notices. That is a fair summary of the trap: the spend delivers barely any significant CX improvement.

Baker’s argument, worth reading in full, is that the monolith cannot serve modern banking because its different functions have fundamentally different technical requirements. Payments need to scale instantly when volumes spike. Lending needs to iterate quickly on decision logic as credit conditions change. Regulatory reporting needs an immutable audit trail. When all of these are bundled into a single system, changes to any one part require testing the entire system, which is why core banking upgrades have historically consumed entire technology departments for years.

The alternative that neobanks like Nubank, Monzo and Starling proved at scale is domain-driven architecture, where payments, lending, and identity each own their own data and can be scaled and changed independently. Domains communicate by publishing events in real time. Unlike traditional systems where everything is shared and functionally baked into one platform, they don’t require change freezes across the entire bank because the lending team wants to update a credit model. If you’re a nerd, then this article by Monzo makes for interesting reading.
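The idea of domains that own their data and communicate by publishing events can be sketched as follows. This is an illustrative toy, not how Nubank, Monzo or Starling actually implement it: `EventBus` is an in-memory stand-in for a real event stream, and the class names and the `payment.completed` topic are hypothetical.

```python
from collections import defaultdict
from typing import Callable

Event = dict  # a plain dict stands in for a typed event schema
Handler = Callable[[Event], None]


class EventBus:
    """In-memory stand-in for a real event stream (assumed for illustration)."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: Event) -> None:
        for handler in self._subscribers[topic]:
            handler(event)


class PaymentsDomain:
    """Owns payment records; other domains never read this store directly."""

    def __init__(self, bus: EventBus) -> None:
        self._bus = bus
        self._payments: list[Event] = []

    def record_payment(self, customer_id: str, amount: float) -> None:
        event = {"customer_id": customer_id, "amount": amount}
        self._payments.append(event)
        self._bus.publish("payment.completed", event)


class LendingDomain:
    """Builds its own view of cash inflows purely from published events."""

    def __init__(self, bus: EventBus) -> None:
        self._inflows: dict[str, float] = defaultdict(float)
        bus.subscribe("payment.completed", self._on_payment)

    def _on_payment(self, event: Event) -> None:
        self._inflows[event["customer_id"]] += event["amount"]

    def observed_inflows(self, customer_id: str) -> float:
        return self._inflows[customer_id]


bus = EventBus()
lending = LendingDomain(bus)
payments = PaymentsDomain(bus)
payments.record_payment("sme-1", 100.0)
payments.record_payment("sme-1", 50.0)
print(lending.observed_inflows("sme-1"))
```

Because lending only depends on the event, the lending team can change how it uses inflows, or swap in a new credit model, without touching the payments code or freezing changes elsewhere.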

This is where the connection to AI becomes concrete. An AI agent embedded within a lending domain can consume a continuous stream of relevant events (repayment behaviour, transaction patterns, cash flow signals) and refine its model against real outcomes as they happen. A fraud detection AI within the payments domain can ingest every transaction as it occurs and intervene before the ledger settles. Because each domain is self-contained, you can deploy a specialist AI within it without touching anything else in the bank. The architecture makes that possible in a way that a monolith structurally cannot. The outcome of bolting AI onto a monolith instead is captured in the image below:

Source: Programmer Humour - Adding AI Chatbots to Legacy Systems
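The difference between an AI that can intervene before the ledger settles and one that only watches a copy of the data can be sketched as below. It is a simplified illustration under stated assumptions: `fraud_score` is a placeholder rule standing in for a real model, and the class, function names and the 0.9 threshold are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Transaction:
    tx_id: str
    account: str
    amount: float


def fraud_score(tx: Transaction) -> float:
    """Placeholder for a real model: simply flags unusually large amounts."""
    return 0.95 if tx.amount > 10_000 else 0.05


@dataclass
class PaymentsDomain:
    """In-domain pattern: scoring sits on the settlement path itself."""
    ledger: list = field(default_factory=list)
    held: list = field(default_factory=list)

    def submit(self, tx: Transaction, threshold: float = 0.9) -> str:
        if fraud_score(tx) >= threshold:
            self.held.append(tx)   # intervene before anything is posted
            return "held"
        self.ledger.append(tx)     # only clean transactions settle
        return "settled"


def observe_replica(replica: list, threshold: float = 0.9) -> list:
    """Bolt-on pattern: scores already-settled rows and can only raise alerts."""
    return [tx.tx_id for tx in replica if fraud_score(tx) >= threshold]


payments = PaymentsDomain()
print(payments.submit(Transaction("t1", "acc-1", 250.0)))
print(payments.submit(Transaction("t2", "acc-1", 50_000.0)))

# The same suspicious transaction, seen only after it reached a read-only copy.
settled_copy = [Transaction("t3", "acc-2", 50_000.0)]
print(observe_replica(settled_copy))
```

Both paths use the same scoring function. What differs is where it sits: in the first it decides whether money moves, in the second it reports on money that has already moved.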

Most banks are currently pursuing edge AI, attaching an AI layer to a legacy core through APIs and read-only data replicas. The AI can see what is happening but cannot act within the system where decisions are made. It observes rather than executes. The gap between a bank doing this and one running specialist AI agents within genuine domain architecture is not a gap in the sophistication of the models. It is a gap in the underlying plumbing that determines what the AI is actually allowed to do. This is what will create structural and compounding long-term differences in cost and customer experience. Note the 3x improvement in Nubank’s models that has enabled them to give “significantly” higher limits to some credit card customers. Stripe last year also described what happened when it deployed its own foundation model. In their words:

We deployed our payments foundation model. By classifying sequences of embeddings, our detection rate for attacks on large users jumped from 59% to 97% overnight. This is a step change in performance. Previously we couldn’t take full advantage of our vast data, now we can.

This time it’s different: such gains in performance will be difficult to catch up with. In the last era of fintech, the bank vendor industry responded to the growth of neobanks with a simple promise to banks: you don’t need to do the heavy lifting of core transformation; we can reduce the core to a dumb ledger and wrap an API layer around it. That way, we can build digital banking platforms or engagement layers that let you match the customer experience neobanks offer. The industry let out a huge sigh of relief and contracts followed. When the CX differential was largely sleek apps, this made a lot of sense. Now the CX differential will be hyper-personalised banking: AI agents that take real-time decisions based on specific parameters, significantly higher credit limits, significantly lower fraud, and lower charges. It will be an economic difference that vendors may not be able to deliver. Tellingly, most vendors are approaching the industry with the same language: forget about your core, we will wrap an AI layer around it. My view is that these offerings will work for chatbots, not for the agentic banking described here.

What the Markets Are Saying and How Banks Should React

What the Market is Already Saying

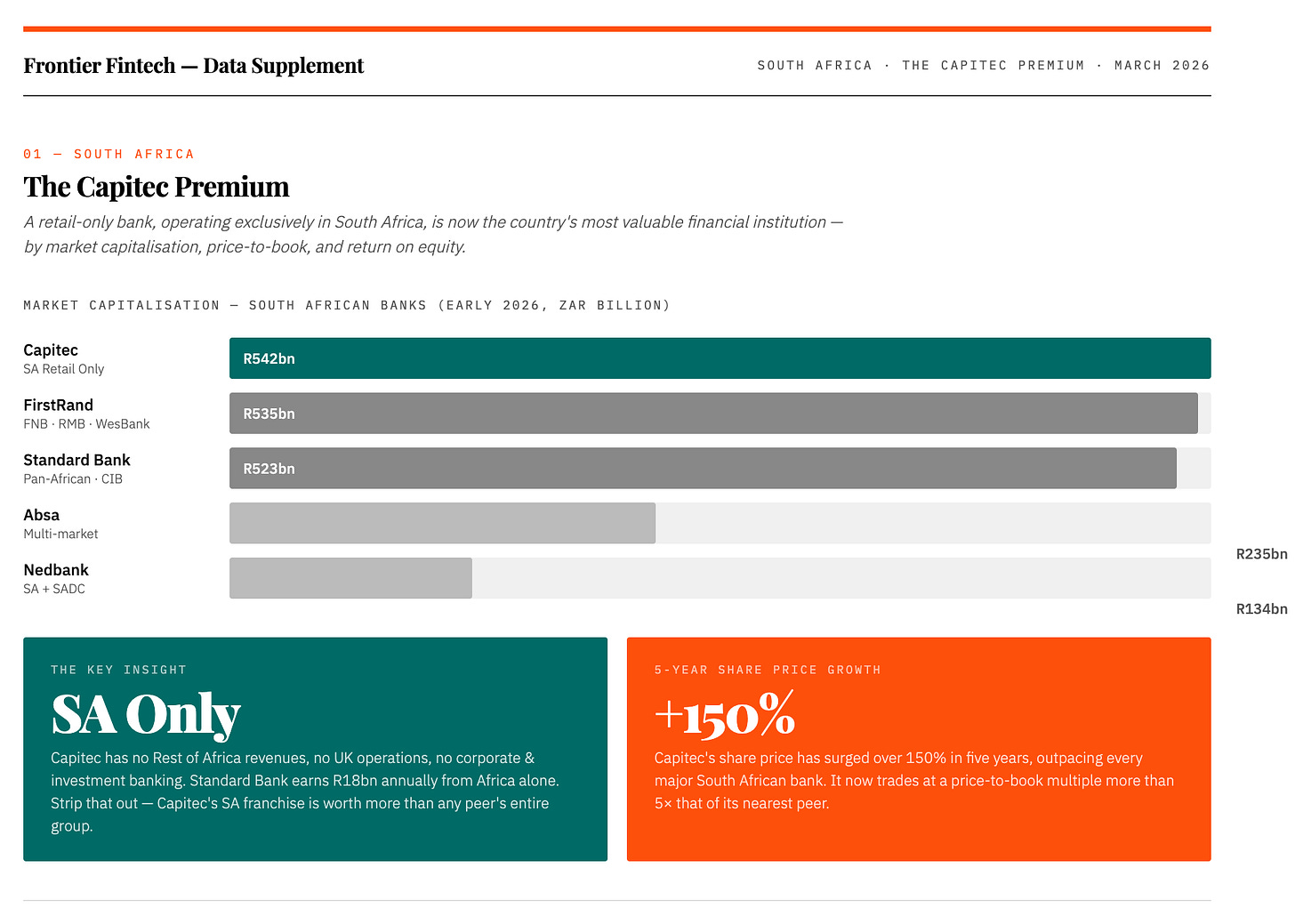

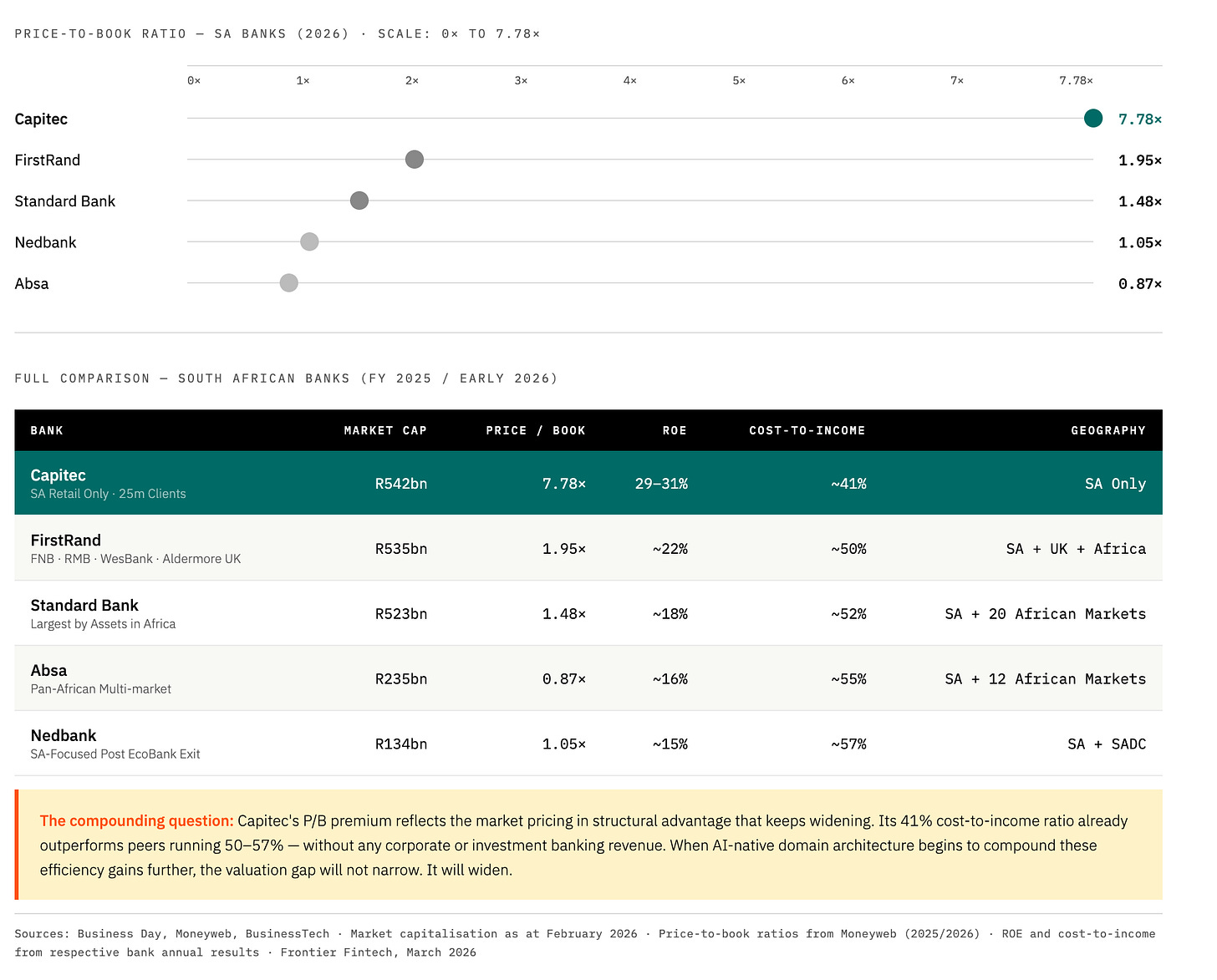

Markets are not always right in the short term, but over long periods they tend to price in things that analysts and executives are still debating. South Africa’s banking market has been sending one such signal.

As of early 2026, Capitec has overtaken FirstRand to become South Africa’s most valuable bank at approximately R542 billion. FirstRand sits at R535 billion, Standard Bank at R523 billion, then a significant drop to Absa at R235 billion and Nedbank at R134 billion. What makes Capitec’s position remarkable is that unlike its peers, it operates almost entirely within South Africa, no Rest of Africa revenues, no UK operations, no corporate and investment banking franchise. Standard Bank generates R18 billion in annual headline earnings from its Rest of Africa business alone. Stripped of those contributions, the market is saying that Capitec’s pure South African retail franchise is worth more than any other bank’s entire group operation.

The price-to-book ratios sharpen this further. Capitec trades at 7.78 times book value. Standard Bank at 1.48 times. Absa at 0.87 times. This is not just a verdict on today’s earnings. It is a bet on compounding, the market pricing in the expectation that Capitec’s structural advantages will continue to widen faster than its competitors can close them.
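One way to read those multiples is to back out the book value each implies, since price-to-book is market capitalisation divided by book value. The sketch below uses only the figures quoted above. It assumes the market caps and the multiples refer to the same date, which the article does not confirm, so the results are rough.

```python
# Figures quoted in the article: market cap in R billions, price-to-book multiple.
banks = {
    "Capitec": {"market_cap": 542, "price_to_book": 7.78},
    "Standard Bank": {"market_cap": 523, "price_to_book": 1.48},
    "Absa": {"market_cap": 235, "price_to_book": 0.87},
}


def implied_book_value(market_cap: float, price_to_book: float) -> float:
    """Rearranges P/B = market cap / book value to solve for book value."""
    return market_cap / price_to_book


for name, figures in banks.items():
    book = implied_book_value(figures["market_cap"], figures["price_to_book"])
    print(f"{name}: implied book value of roughly R{book:.0f} billion")
```

On these numbers, Capitec's implied book value is roughly R70 billion against roughly R353 billion for Standard Bank. The market is assigning a similar valuation to about a fifth of the balance sheet equity, and that difference is where the compounding bet shows up.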

The Nubank data tells the same story from Brazil. Nubank currently sits at approximately $87 billion in market capitalisation, closing in on Itaú Unibanco at $103 billion, a century-old institution still posting quarterly profits above $2 billion. Bradesco, Brazil’s second-largest private bank, sits at $38 billion. Most telling: Itaú has quietly been buying Nubank shares, which may be the most candid signal any incumbent has ever sent about where it believes the industry is going.

Neither Capitec nor Nubank has yet fully deployed AI-native architecture in the sense we have described in this article. When they do, these valuation differentials will widen further. The question for African banks is not whether this happens, but on which side of it they find themselves.

Intellectual Honesty First

Before discussing what banks should do, there is a conversation that needs to happen inside most African banking institutions, and rarely does.

There is a tendency in the industry to conflate machine learning with AI, and to present existing credit scoring models as evidence of genuine AI capability. They are not the same thing. Machine learning models are statistical tools that identify patterns in historical data and apply them forward. They do not learn continuously from live events, they cannot coordinate across domains, and they cannot adapt to conditions they have not encountered. A bank that believes it is already AI-native because it runs ML models is unlikely to make the architectural investments that genuine AI capability actually requires. “We already do AI” may be the most dangerous phrase in African banking technology today, because it forecloses the honest assessment that might otherwise lead to action.

The right questions are simpler and more uncomfortable: Can the bank’s AI systems execute decisions, not just recommend them? Can they intervene in real time? Can they coordinate across domains without human mediation? If the answer is no, the institution is one that uses data well, which is a different thing, and a less defensible position than it appears.

Three Paths

For banks willing to accept that assessment, three paths are broadly available.

Rip and Replace is the most ambitious and the most consistently value-destroying. A full core migration typically takes three to five years and consumes hundreds of millions of dollars. The best case outcome, as Andrew Baker notes, is that nobody notices. Competitors who made different architectural choices have been shipping product improvements every sprint for the duration of the programme. Worse still, moving towards a newer version of Temenos is unlikely to achieve results. That’s why I think Sterling Bank in Nigeria is one to watch. They did a self-build and are reaping the benefits.

Adding AI modules and API layers on top of the existing monolith is what most vendor-led solutions currently offer. It produces something that looks like transformation and delays the reckoning by a few years, but the underlying architecture still cannot support autonomous AI execution. The technical debt compounds quietly in the background.

Image: A Strangler Fig Tree

The strangler fig approach is slower, harder to fund, and the only one that I think could actually work. The aim is to move towards a domain-driven architecture that can work with AI and away from the monolithic or API-powered monolithic system. The strangler fig is a tree that grows around an existing host, gradually drawing its resources until the original structure hollows out and is no longer load-bearing. In banking, this means building new domains alongside the existing core rather than replacing it, migrating products and customer segments progressively, and shrinking the legacy footprint until it becomes a system of record rather than a system of intelligence. For instance, a bank can build a new SME proposition with a truly headless core provider rather than an incumbent CBS, build specific domains across payments, lending, fraud and so on, and move a chunk of SME banking to this new system. It would continue like this over time until the entire bank is operating on the new infrastructure.
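The progressive migration described above usually comes down to a routing layer that decides, per request, whether the legacy core or a new domain handles it. The sketch below is a minimal illustration under assumed names: `StranglerRouter`, the segment labels, and the two handler functions are hypothetical, and a real migration would also have to move balances and history.

```python
def legacy_core_handle(request: dict) -> str:
    """Stand-in for a call into the existing core banking system."""
    return f"legacy:{request['operation']}"


def new_domain_handle(request: dict) -> str:
    """Stand-in for a call into a newly built domain service."""
    return f"domain:{request['operation']}"


class StranglerRouter:
    """Routes by customer segment; the migrated set grows over time."""

    def __init__(self) -> None:
        self._migrated: set[str] = set()

    def migrate(self, segment: str) -> None:
        self._migrated.add(segment)

    def route(self, request: dict) -> str:
        if request["segment"] in self._migrated:
            return new_domain_handle(request)
        return legacy_core_handle(request)


router = StranglerRouter()
router.migrate("sme")  # first slice moved to the new stack

print(router.route({"segment": "sme", "operation": "payment"}))
print(router.route({"segment": "retail", "operation": "payment"}))

router.migrate("retail")  # a later slice; the legacy footprint shrinks again
print(router.route({"segment": "retail", "operation": "payment"}))
```

The legacy core keeps serving everything that has not been migrated, so there is no big-bang cutover. Each call to `migrate` is a small, reversible step.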

Conclusion

One honest caveat. The strangler fig approach requires sustained engineering investment and an organisation capable of building domain architecture rather than managing vendor relationships. For large African banks, that investment is within reach if the board commits to it. For smaller institutions operating on limited technology budgets, the path runs through partnership, finding core banking providers who have made the event streaming investment, or working with vendors offering domain capabilities as managed services. That market in Africa remains nascent, which is itself an argument for urgency.

I’ve thought about that HR leader a few times since that breakfast. His board did exactly what boards are supposed to do, set a strategic direction, create accountability, drive urgency. The mandate was right. The problem is that a mandate lands on whatever infrastructure is already there. You can decree AI transformation from a boardroom, but the decree cannot reach into a monolithic core and rewire it. The gap between what the board wants and what the bank can actually deliver isn’t a leadership gap. It’s an architectural one, and it has been accumulating for fifty years.

If I were the chair of that bank, I wouldn’t be asking for an AI roadmap by the end of Q1. I’d be asking one question: is our architecture one where AI can execute, or only one where it can observe? The answer to that question determines everything else, which vendors to believe, which pilots to fund, which promises to hold accountable. A bank that cannot answer it honestly will spend the next three years running impressive-looking AI programmes that leave the underlying constraint untouched.

The banks that close that gap now will set the terms on which everyone else has to compete. The ones that wait will find themselves, five years from now, doing an exceptional job of serving a market that has already moved on.